iHap: An Interactive Haptic-based Application for Active Exploration of Facial Expressions

Contact

Dr. Troy L. McDaniel

Members

Dr. Troy L. McDaniel

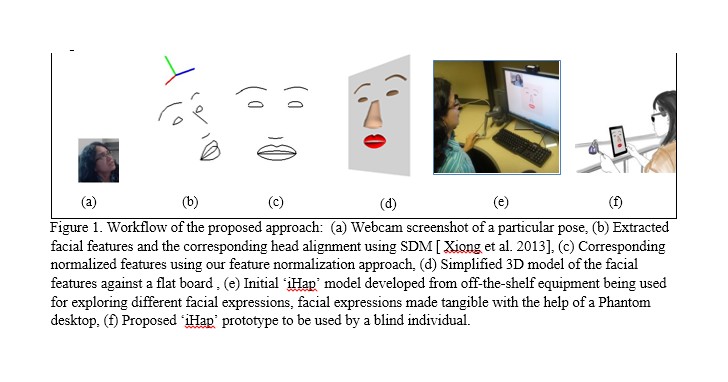

People who are blind have an inherent tendency to explore an interaction partner’s face in order to know them better and feel closer. This becomes a necessity when partners are engaged in conversation and want to be aware of each other’s facial expressions. Current technologies can deliver only coarse-grained, abstract haptic cues to people who are visually impaired. A better solution is needed: one that allows a blind individual to interpret others’ facial expressions while engaged in bilateral conversation. To meet this goal, we propose the development and evaluation of a platform called ‘iHap’, an interactive haptic-based “Explore-learn-interact” paradigm that enables a blind individual to access their own facial expressions in a dynamic virtual environment. We hypothesize that persistent haptic exploration of how their own facial features move for different expressions in the virtual environment will help a blind individual master the haptic language of facial expressions, and hence explore others’ facial expressions and emotions during social interaction. Because a blind individual makes active use of the sense of touch through feature-by-feature analysis, emphasis has been given to feature-based active exploration of facial expressions. Our initial pilot test focuses on exploring and deciphering the “haptic language” of facial expressions in an interactive platform via real-time graphic and haptic updates of simplified facial features. The application, in its current state, is to be tested and evaluated by the target group.