Person Recognition using Multi-modal Biometrics - Face and Speech

Contact

Members

Dr. Sethuraman "Panch" Panchanathan

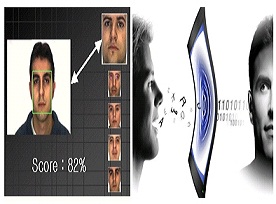

Automated verification of human identity is indispensable in security and surveillance systems, as well as in assistive technology applications. Uni-modal systems, which rely on a single modality for authentication, suffer from several limitations. Multi-modal systems consolidate evidence from multiple sources and are therefore more reliable. The main objective of this project is to develop a robust recognition engine based on the face and speech modalities. Biometric authentication schemes based on audio and video are non-intrusive and are therefore better suited to real-world settings than intrusive methods such as fingerprint and retina scans. This is a collaborative project that brings together expertise from two fields: face-based recognition (at ASU) and speech-based recognition (at Tecnológico de Monterrey, Mexico).